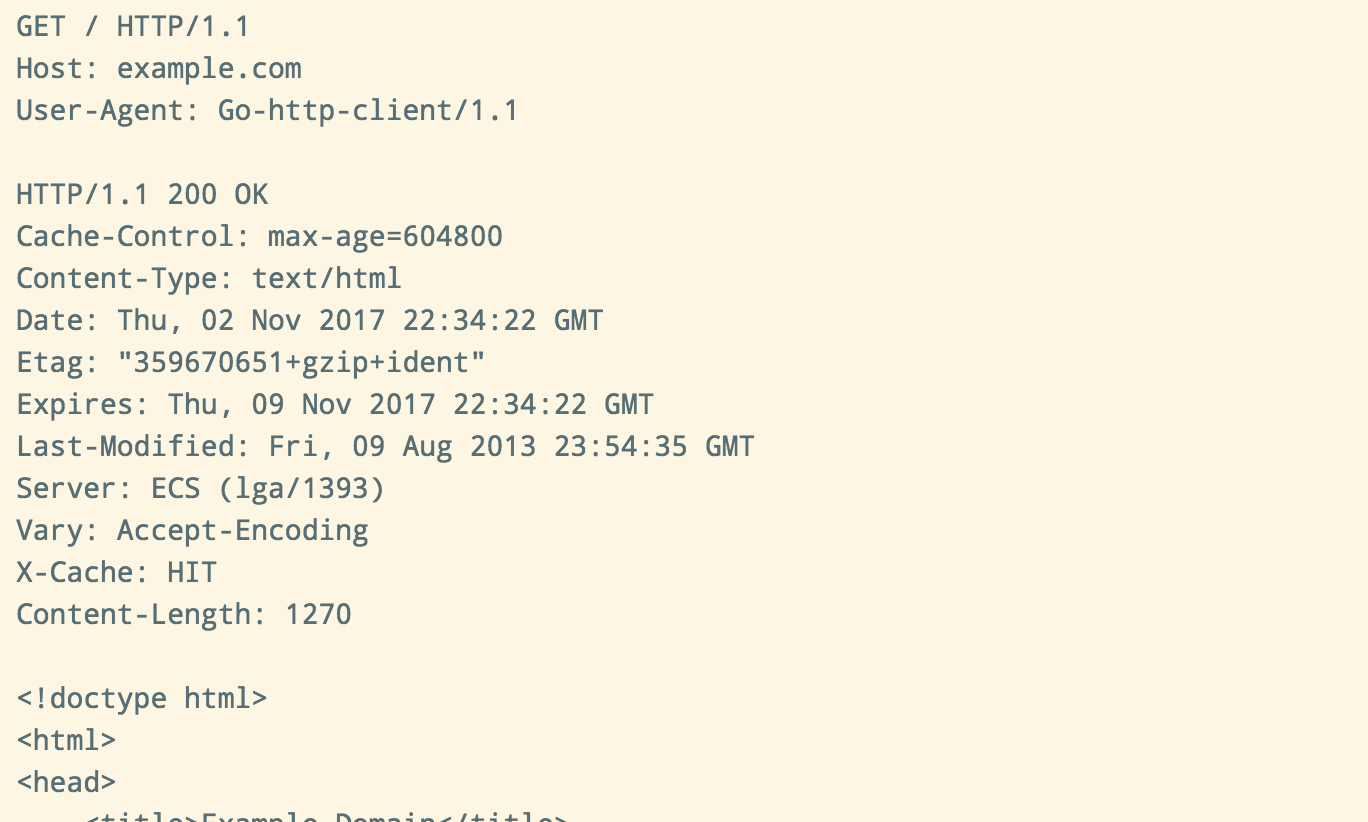

Versions, and was occurring despite the host having a TTL-respecting DNS cache. Was because the backend target used DNS to do blue/green rollouts of new (in part) as a reverse proxy kept proxying the same dead backend endpoints. If that’s theĬase then caveat emptor-I have another piece of anecdata for you.Īt my previous client I had an issue where a high volume Go-based service acting (perhaps even in thanks to the section on connection pooling). I say “by default” because you might have a well-tuned set of reused connections To continually dial that same unchanging couple of domains by default.

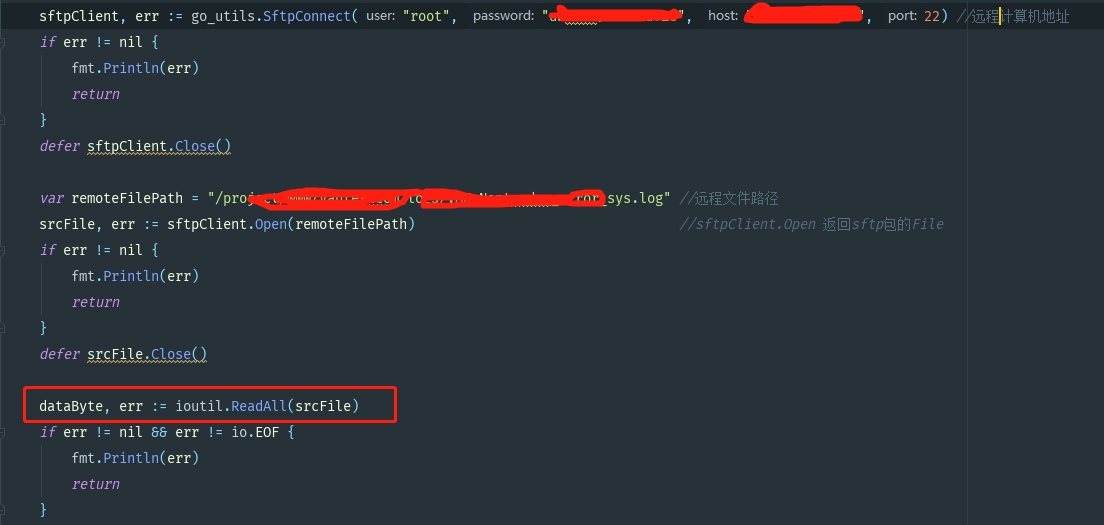

With only a couple of upstream dependencies. You might consider one of these options if you have latency-sensitive services Need to consider overriding if you don’t have full control over your client’s NOTE: There is also the package singleton net.DefaultResolver that you may I can recommend dnscache for this purpose. Http.Transport (as seems to be the general theme of this fieldnote) and wire In /etc/nf and provides no sandbox-local cache server.Īnother option you have in this situation is to override the DialContext on ForĮxample, AWS Lambda’s runtime sandbox contains a single remote Route53 address Worth pointing out that you are not always in control of that situation. Support your DNS caching needs by way of something like dnsmasq. The Go core team’s stance is you should defer to the underlying host platform to Something to always be cognisant of when trying to produce an optimised Go I’m personally thankful for this default after beenīurned countless times by that JVM cache in a past life. Unlike the JVM, there is no builtin DNS cache in the Go standard runtime. Want url.ParseRequestURI and then some further checks if you are wanting to This is a small one, but as far as I’m concerned, the url.Parse method isĮssentially infallible and it trips me up all the bloody time. Slot in use means another connection with the same quadruplet (source addr:port,ĭest addr:port) cannot exist, and this in turn can result in ephemeral portĮxhaustion - the dreaded EADDRNOTAVAIL. In the kernel, but most critically there is the slot in the connection table. (very hard to change in Linux as per RFC793 adherence).Ī buildup of TIME_WAIT sockets can have adverse effects on the resources of aįor one, there is the additional CPU and memory to maintain the socket structure The kernel will keep these around for ~60s Of which being primarily to prevent delayed packets from one connection beingĪccepted by a subsequent connection. 
The kernel actually transitions the socket to a TIME_WAIT state, the purpose Me in the past, rendering entire hosts useless: closed != closed (in Linux This is of course not optimal, but there is another hidden cost that has bitten There are myriadĬonnection establishment costs: kernel network stack processing and allocation ĭNS lookups, of which there may be many (read about nf(5):ndots:nĮspecially if you run Kubernetes clusters) as well as the TCP and TLS 98 of those 100 connections getįirst things first, the means your service is working harder. Your client is itself also a server part of a larger microservice ecosystem,įorking routines per request it receives). To make requests to the same upstream dependency (this is not so contrived if So take the scenario of 100 goroutines sharing the same or default http.Client

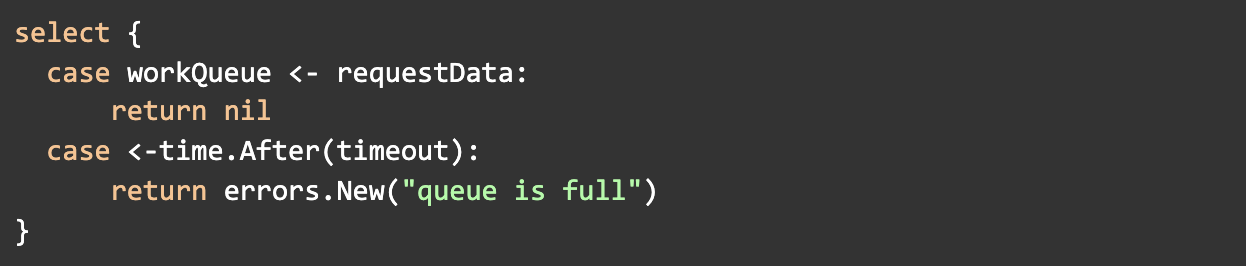

The relationship between these three settings can be summarised as follows: a connection pool is retained of size 100, but only 2 per target host, and if a connection remains unutilised for 90 seconds it will be removed and closed.
